Overview

Databricks is a cloud-based data platform, built around Apache Spark, that unifies data engineering, data science, and analytics in a single collaborative workspace. It enables organizations to process large-scale data, build machine learning models, and perform advanced analytics efficiently. With the Zeotap integration, you can push audiences created in Zeotap CDP to your Databricks instance.

Supported Identifiers

You can send any identifier or attribute of your choice from Zeotap CDP to Databricks using this integration.

Note: Sending event data is currently not supported.

Available Actions and Supported Features

The following table lists the available action types for the integration and the supported features for each action type:

| Action Name | ID EXTENSION | DELETE | DELTA UPLOAD |
|---|---|---|---|
| Send attributes and identifiers to Databricks | - | - | - |

Prerequisites

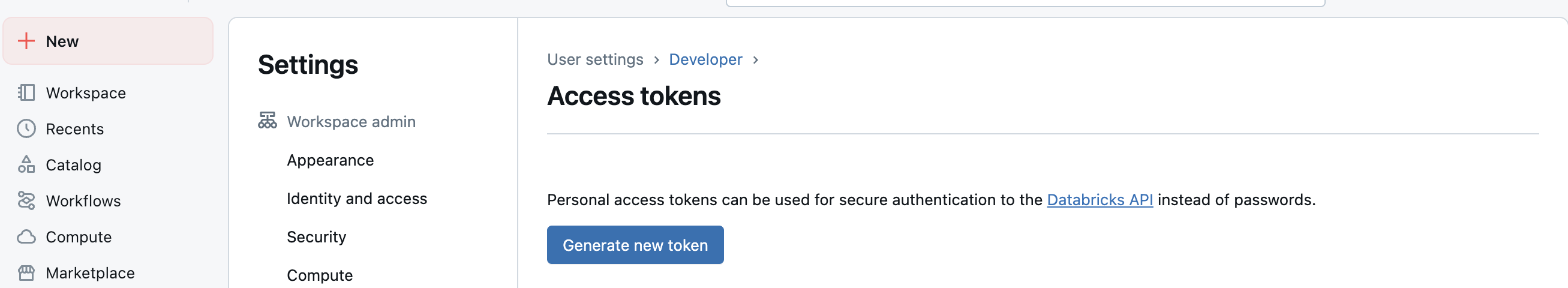

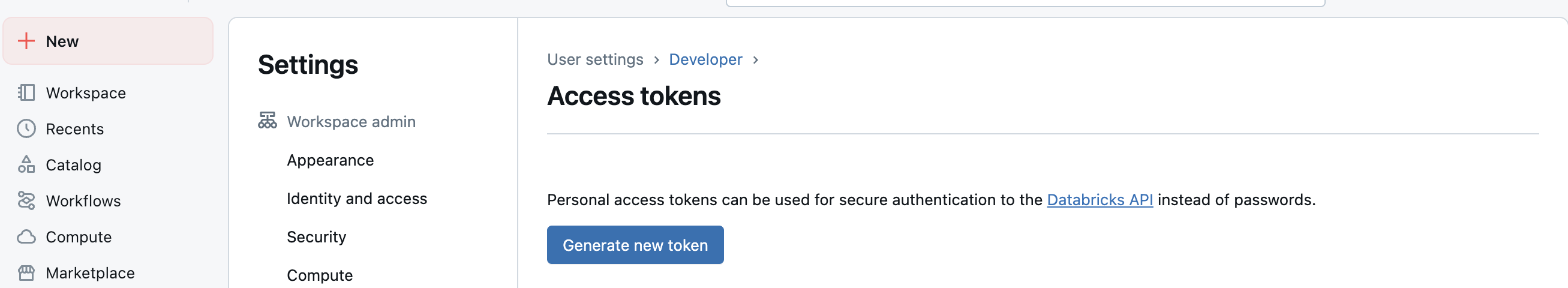

Before you create a Databricks Destination in Zeotap CDP, ensure you have the following details ready.

Databricks Access Token: You can generate and manage access tokens under Settings → User settings → Developer → Generate new token.
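To confirm that a token works before you enter it in Zeotap CDP, you can call any authenticated Databricks REST endpoint with it. The sketch below is a minimal example using only the Python 3 standard library. It calls the Clusters API list endpoint (`/api/2.0/clusters/list`) and sends the token as a Bearer header. The function names are hypothetical helpers, not part of Zeotap CDP or Databricks.

```python
import urllib.error
import urllib.request
from urllib.parse import urlsplit


def build_token_check_request(host: str, token: str) -> urllib.request.Request:
    """Build a GET request to the Clusters API, authenticated with a personal access token."""
    # Accept the workspace URL with or without a scheme and with or without a trailing slash.
    netloc = urlsplit(host if "://" in host else f"https://{host}").netloc
    return urllib.request.Request(
        f"https://{netloc}/api/2.0/clusters/list",
        headers={"Authorization": f"Bearer {token}"},
        method="GET",
    )


def token_is_valid(host: str, token: str, timeout: float = 10.0) -> bool:
    """Return True if the workspace accepts the token, False if it rejects it."""
    request = build_token_check_request(host, token)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except urllib.error.HTTPError as err:
        # 401/403 mean the token was rejected; any other HTTP error is re-raised for the caller.
        if err.code in (401, 403):
            return False
        raise
```

For example, `token_is_valid("https://<your-workspace-host>", "<your-access-token>")` returns `False` for a rejected token. Network failures such as an unreachable host surface as `urllib.error.URLError` so they are not mistaken for a bad token.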

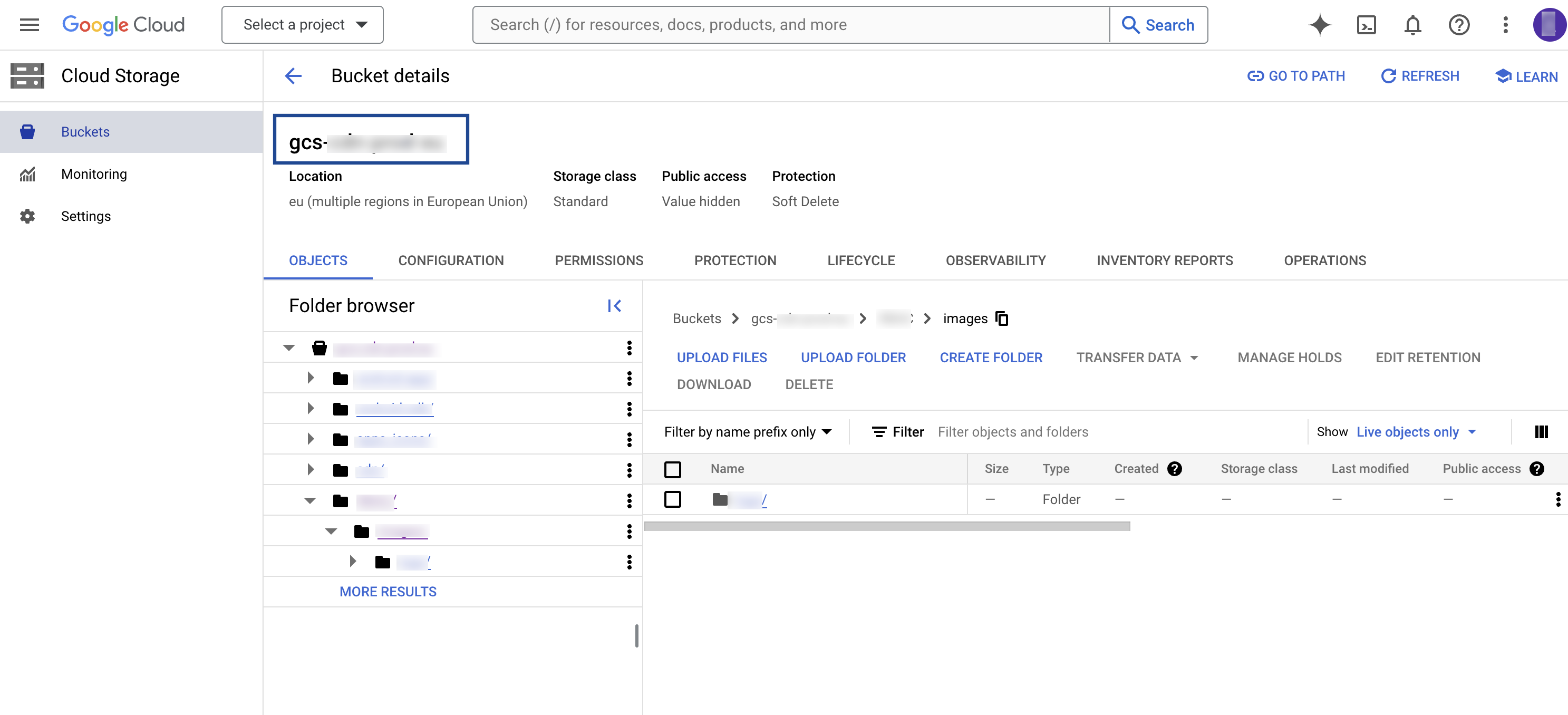

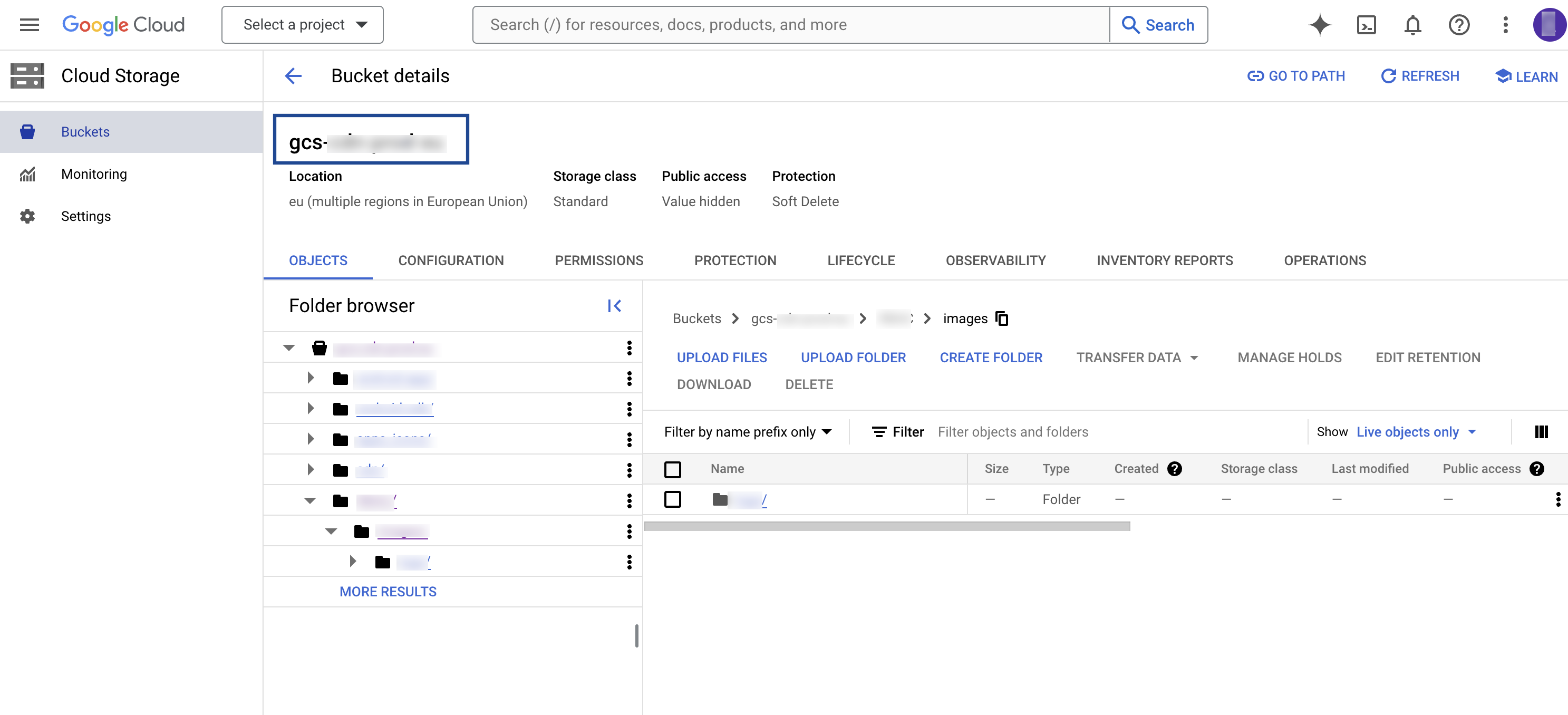

GCP Bucket: The name of the Google Cloud Storage (GCS) bucket where the exported data will be delivered (e.g., gcs-ireland-all-eu-qa-backend-export-zeotap-com). This is the client's bucket, where Zeotap places the data before Databricks Auto Loader picks it up and loads it into Delta tables.
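Zeotap writes files to the bucket, and Databricks Auto Loader loads them into Delta tables. The sketch below shows roughly what such an Auto Loader stream looks like in PySpark. It assumes the files are JSON (matching the Send JSON to GCS action), that the cluster can already read the bucket, and Python 3.9+. The `prefix` folder and `checkpoint_dir` are hypothetical values you would choose. The job that the integration runs on your cluster may be configured differently.

```python
def gcs_source_uri(bucket: str, prefix: str = "") -> str:
    """Build the gs:// URI of the bucket (and optional folder) that Zeotap writes to."""
    bucket = bucket.strip().removeprefix("gs://").strip("/")
    prefix = prefix.strip("/")
    return f"gs://{bucket}/{prefix}" if prefix else f"gs://{bucket}/"


def start_autoloader(spark, bucket: str, target_table: str, checkpoint_dir: str, prefix: str = ""):
    """Incrementally load JSON files from the bucket into a Delta table using Auto Loader."""
    stream = (
        spark.readStream.format("cloudFiles")
        .option("cloudFiles.format", "json")
        # Auto Loader stores the inferred schema here so it stays stable across runs.
        .option("cloudFiles.schemaLocation", f"{checkpoint_dir}/schema")
        .load(gcs_source_uri(bucket, prefix))
    )
    return (
        stream.writeStream
        # The checkpoint records which files have already been ingested.
        .option("checkpointLocation", checkpoint_dir)
        # Process every file that has arrived so far, then stop (needs Spark 3.3+).
        .trigger(availableNow=True)
        .toTable(target_table)
    )
```

In a Databricks notebook, a call might look like `start_autoloader(spark, "my-bucket", "main.zeotap.audiences", "gs://my-bucket/_checkpoints/zeotap")`, where all names are placeholders. The function returns the running streaming query.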

Service Account:

- Client Service Account JSON: This is the Client Service Account provided by the client, which Zeotap uses to upload transformed audience (segment) data into the Google Cloud Storage (GCS) bucket. The uploaded data is then picked up automatically by Databricks Autoloader for further processing and loading into Databricks tables.

- Zeotap Service Account: This is the Zeotap Service Account used to access the Google Cloud Storage bucket. Ensure that you whitelist the Zeotap Service Account on the bucket so that audiences (segments) can be pushed from Zeotap CDP to Google Cloud Storage.
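The two account options above can be prepared with a couple of small checks. The sketch below is illustrative and assumes Python 3.9+. `check_service_account_key` looks for the standard fields of a Google Cloud service account key file before you upload it. `add_bucket_binding` edits a bucket IAM policy in the JSON shape used by the Cloud Storage IAM API (a list of `role`/`members` bindings) to whitelist a service account. The role Zeotap actually requires is not stated here. The default `roles/storage.objectCreator` is an assumption, so confirm the required role with Zeotap. Both function names are hypothetical.

```python
import json

# Fields present in a standard Google Cloud service account key file.
REQUIRED_KEY_FIELDS = {"type", "project_id", "private_key_id", "private_key", "client_email"}


def check_service_account_key(raw: str) -> list[str]:
    """Return problems found in a service account key file; an empty list means it looks usable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        return [f"not valid JSON: {err.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be a JSON object"]
    problems = [f"missing field: {name}" for name in sorted(REQUIRED_KEY_FIELDS - set(data))]
    if "type" in data and data["type"] != "service_account":
        problems.append(f"unexpected type: {data['type']!r}")
    return problems


def add_bucket_binding(policy: dict, service_account_email: str,
                       role: str = "roles/storage.objectCreator") -> dict:
    """Return a copy of a bucket IAM policy that grants `role` to the service account.

    The default role is an assumption; use the role Zeotap specifies.
    """
    member = f"serviceAccount:{service_account_email}"
    # Copy each binding (and its member list) so the input policy is left unchanged.
    bindings = [dict(b, members=list(b.get("members", []))) for b in policy.get("bindings", [])]
    for binding in bindings:
        # Reuse an existing unconditional binding for the same role, if there is one.
        if binding.get("role") == role and "condition" not in binding:
            if member not in binding["members"]:
                binding["members"].append(member)
            break
    else:
        bindings.append({"role": role, "members": [member]})
    return {**policy, "bindings": bindings}
```

The policy helper only builds the updated document. Applying it to the bucket (for example through the Cloud Console or the Cloud Storage IAM API) is a separate step. Calling the helper twice with the same account leaves the policy unchanged.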

Create a Destination for Databricks

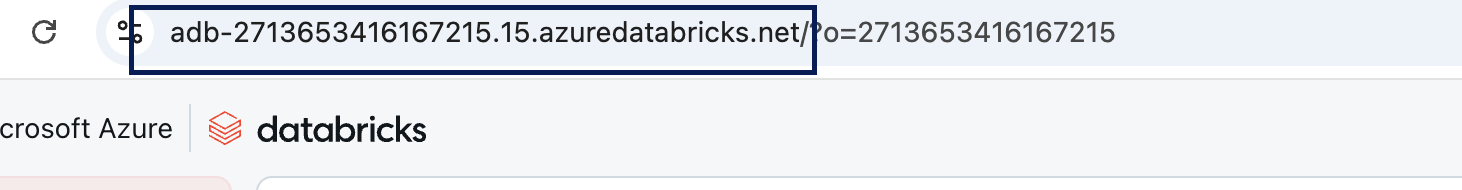

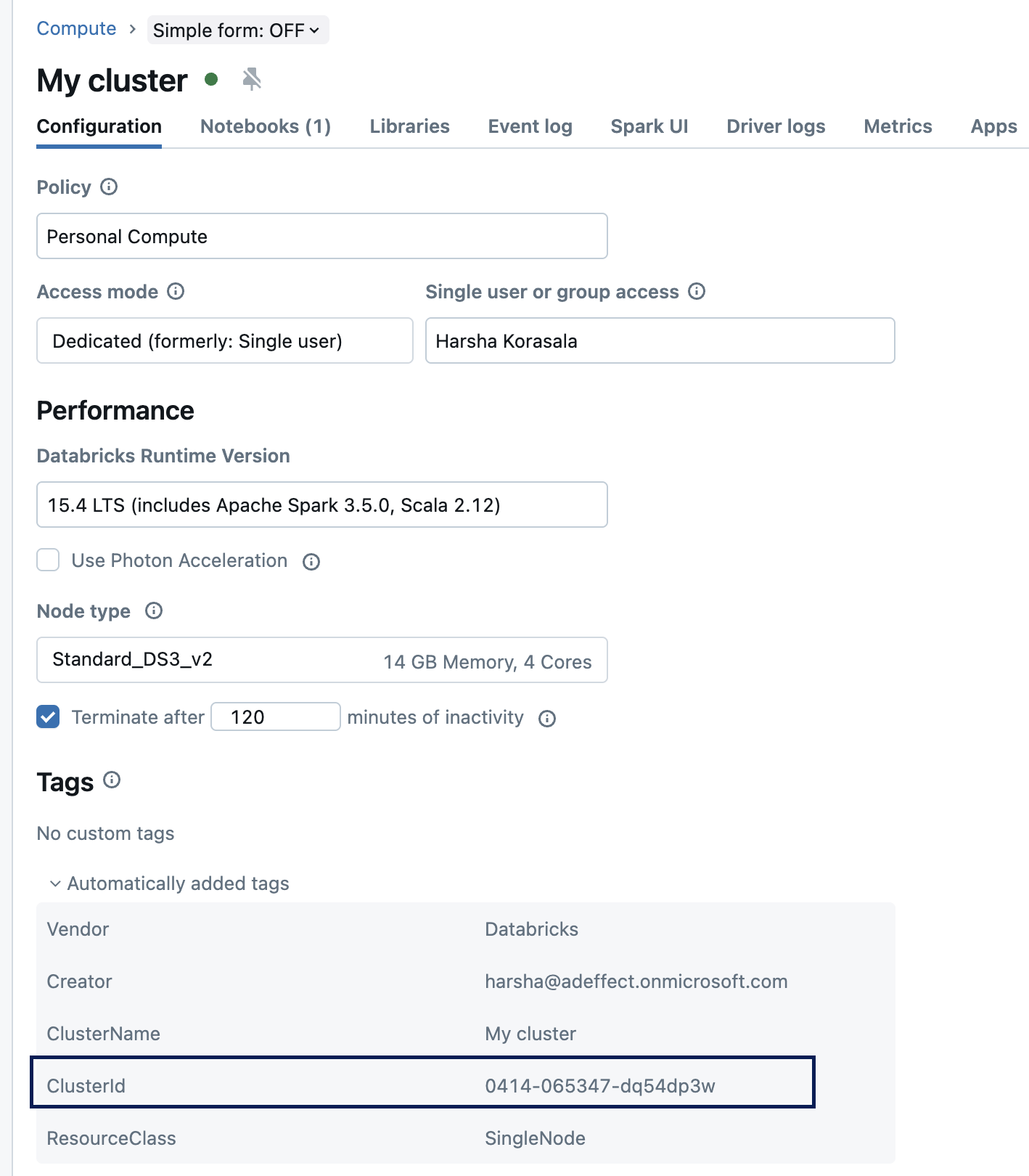

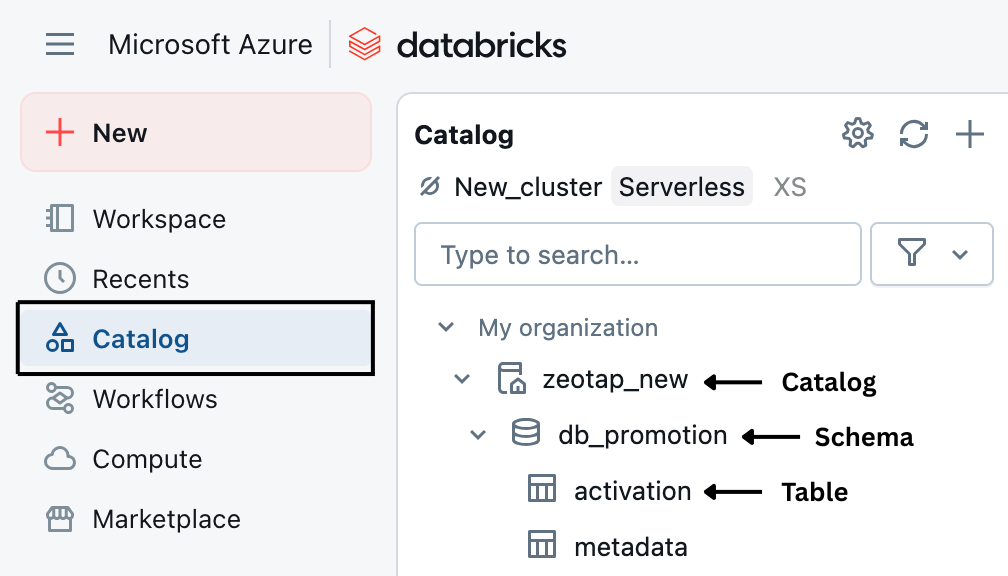

Perform the following steps to create a Destination for Databricks:

Click Databricks. A screen appears displaying details about the destination on the left. The right-hand side of the screen lists the fields required to establish the integration. Provide the required details as follows:

a. Enter a name for the Destination.

b. Enter the Destination Instance Name.

c. In the Databricks Host field, enter your Databricks workspace URL.

d. In the Databricks Access token field, enter the Databricks personal access token used for API authentication.

e. In the Cluster ID field, enter the ID of the Databricks cluster on which the job should run.

f. In the Catalog field, enter the Unity Catalog name used for table registration in Databricks.

g. In the Schema field, enter the schema (or database) name in the Databricks metastore.

h. In the Table field, enter the name of the table to which the data should be written in Databricks.

i. In the Upload Type dropdown, choose the Upload Type to define the kind of connection or location to which you want to push your data.

- Currently, only the GCP upload type is supported.

- If you choose Zeotap Service Account as the Account, ensure that you whitelist the service account provided by Zeotap CDP so that audiences (segments) can be pushed from Zeotap CDP to Google Cloud Storage. This service account information is auto-populated under Service Account to be Whitelisted, as shown in the image below.

- If you choose Client Service Account Json as the Account, upload the JSON file containing the required authentication information using the + Select File option, so that Zeotap CDP can push the audiences to Google Cloud Storage.

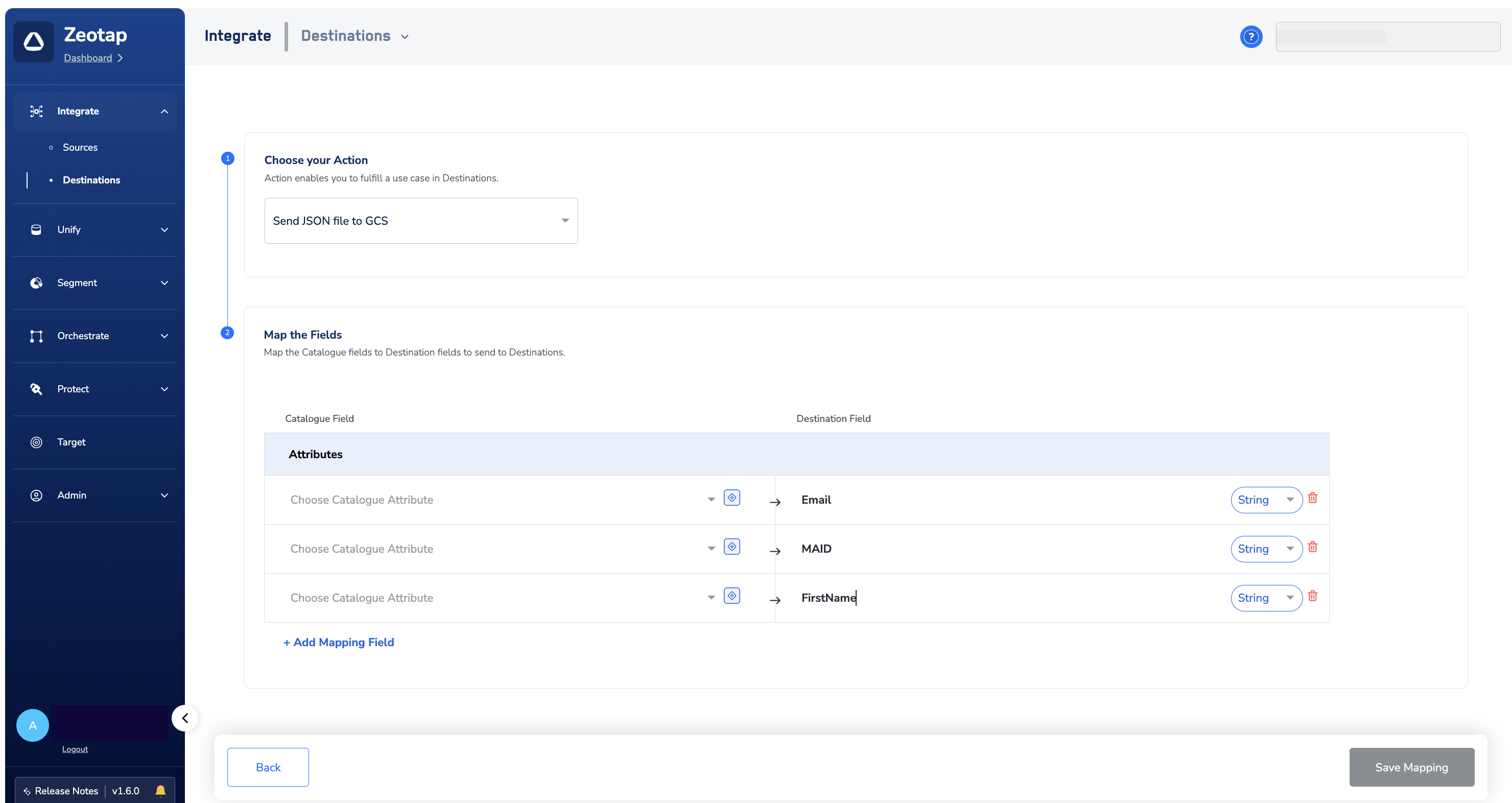
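Before saving the destination, it can help to sanity-check the Databricks fields. The sketch below assumes Python 3.9+. The class and method names and the example values are hypothetical, not part of Zeotap CDP. It normalizes the host to a workspace URL and builds the three-level Unity Catalog name (`catalog.schema.table`) that the Catalog, Schema and Table fields combine into. It also forms the Clusters API URL (`/api/2.0/clusters/get`) that returns the cluster's details. The token is left out on purpose so it is not stored alongside the other values. The identifier check is deliberately conservative (letters, digits, underscores) and is an assumption, not Databricks' exact naming rules.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlencode, urlsplit

# Conservative check for an unquoted identifier: letters, digits and underscores only.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


@dataclass(frozen=True)
class DatabricksDestination:
    host: str
    cluster_id: str
    catalog: str
    schema: str
    table: str

    def workspace_url(self) -> str:
        """Normalize the host to https://<host> with no path or trailing slash."""
        netloc = urlsplit(self.host if "://" in self.host else f"https://{self.host}").netloc
        return f"https://{netloc}"

    def full_table_name(self) -> str:
        """Three-level Unity Catalog name: catalog.schema.table."""
        return f"{self.catalog}.{self.schema}.{self.table}"

    def cluster_check_url(self) -> str:
        """URL of the Clusters API call that returns this cluster's details."""
        query = urlencode({"cluster_id": self.cluster_id})
        return f"{self.workspace_url()}/api/2.0/clusters/get?{query}"

    def problems(self) -> list[str]:
        """List obvious input mistakes; an empty list means nothing was flagged."""
        issues = []
        if not self.host.strip():
            issues.append("host is empty")
        if not self.cluster_id.strip():
            issues.append("cluster_id is empty")
        for label, value in (("catalog", self.catalog), ("schema", self.schema), ("table", self.table)):
            if not _IDENTIFIER.match(value):
                issues.append(f"{label} {value!r} is not a simple identifier")
        return issues
```

For example, `DatabricksDestination("adb-123.4.azuredatabricks.net/", "0123-456789-abcde123", "main", "zeotap", "audiences").full_table_name()` gives `main.zeotap.audiences`.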

In the new screen that appears, choose the appropriate action and mapping as explained below. Under Choose your Action, select Send JSON to GCS as the action for activating your audience (segment) in Audiences.

Using this action, you can send any number of identifiers and attributes to your Databricks instance.
